How to cache dependencies in GitLab

Hi everybody!

Today I want to share my experience with dependency caching in GitLab CI.

Why it is needed

I have a small pet project where I usually experiment with new technologies and approaches. Its repository is hosted on GitLab, where I configured CI/CD jobs for testing and deploying the project.

The CI job that runs the tests usually took about 2 minutes. Every time it ran, I wondered what was actually being done during that time. One example is installing the Python dependencies.

On the one hand, a clean install on every run guarantees reproducible builds (let’s say hello to left-pad and mimemagic 😄).

On the other hand, these steps are repeated every time I push changes to the repository. And this is just a pet project.

Let’s try to enable caching 🤟

Here is the official GitLab documentation on CI caching, with examples: https://docs.gitlab.com/ee/ci/caching

The project I tested CI caching on is built with Django and uses Poetry for dependency and virtual environment management.

What .gitlab-ci.yml looked like before the changes

    stages:

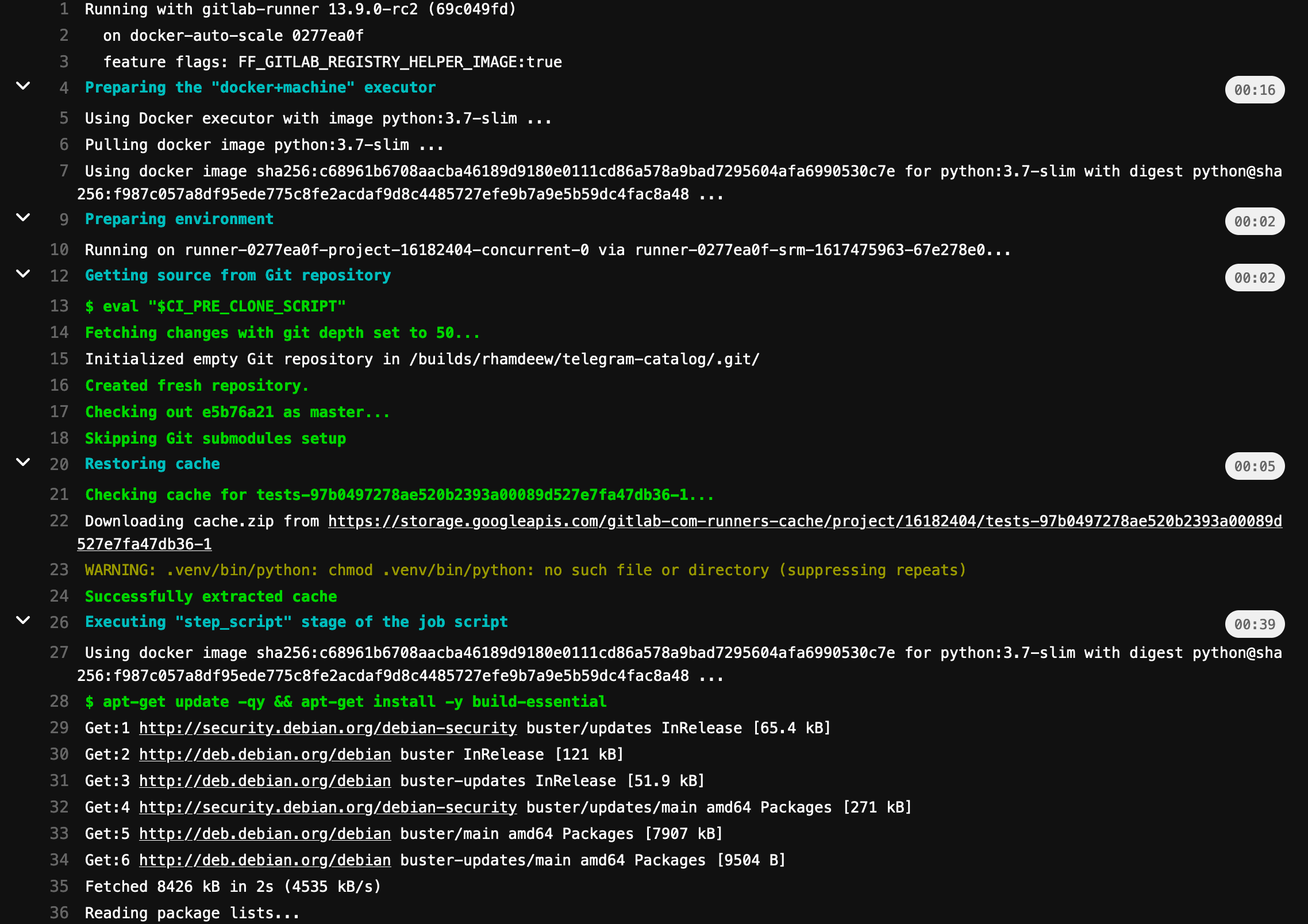

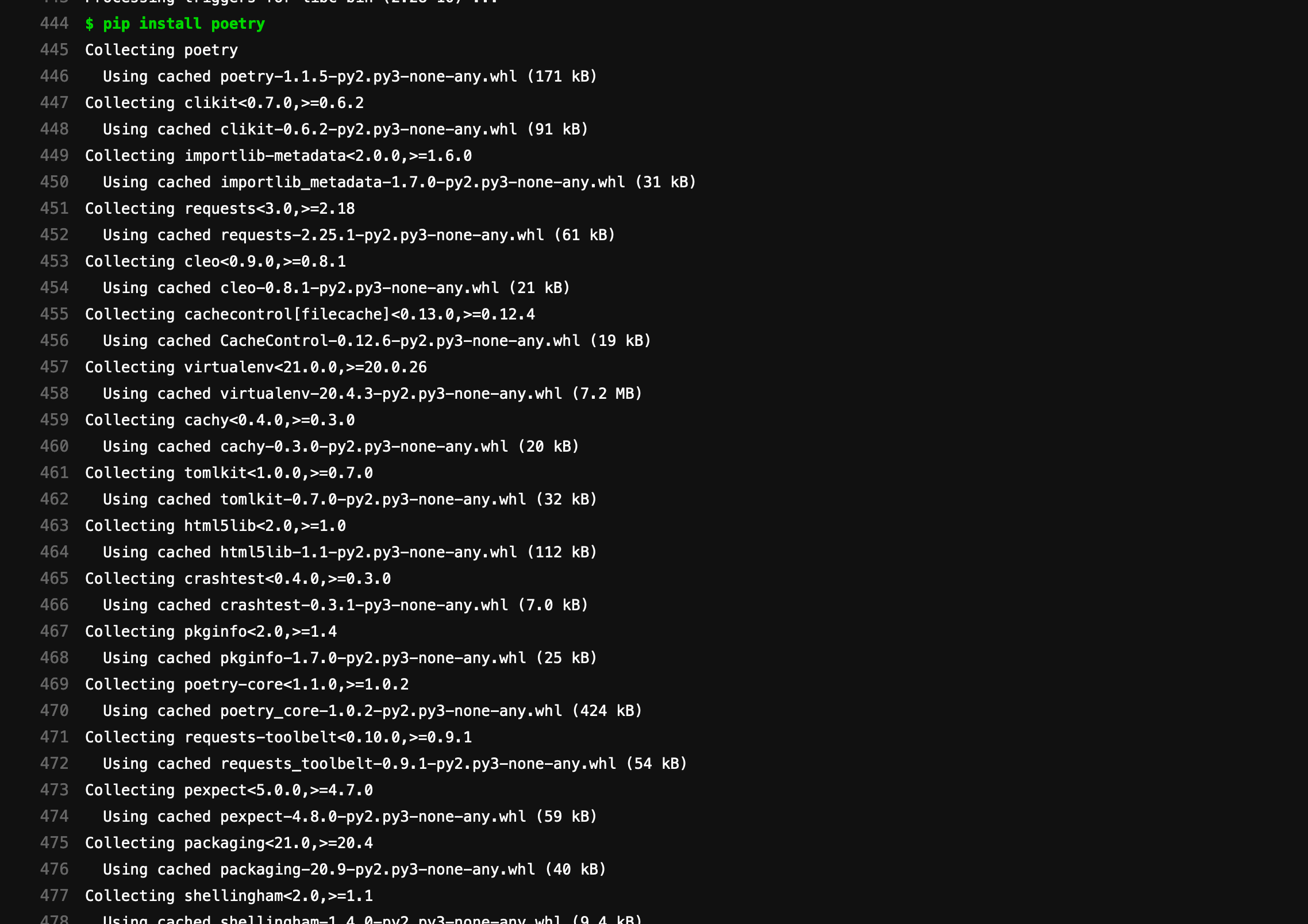

Here we install Debian packages, then install Poetry with pip, and finally install the project dependencies with Poetry.
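The original listing is not fully preserved here, so the following is only a minimal sketch of what such a configuration might look like. The image tag, the Debian package name (`libpq-dev`), the job name, and the test command are all assumptions made for illustration, not the actual contents of the project's file.

```yaml
stages:
  - test

test:
  stage: test
  # Assumed base image; the original image is not known.
  image: python:3.11
  before_script:
    # Hypothetical Debian package; substitute whatever the project needs.
    - apt-get update && apt-get install -y --no-install-recommends libpq-dev
    # Install Poetry through pip, then the project dependencies through Poetry.
    - pip install poetry
    - poetry install
  script:
    # Assumed test command for a Django project.
    - poetry run python manage.py test
```

With a layout like this, every pipeline run downloads and installs all packages again, because nothing is kept between jobs.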

What .gitlab-ci.yml looks like after the changes

    stages:

I added settings that tell pip and Poetry where to store packages. Then I added a ‘cache’ section and used the poetry.lock and .gitlab-ci.yml files as the cache key.

This means that if at least one of these files changes, the packages are installed from PyPI again; otherwise, the cached directories with the already installed packages are reused.
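As a rough illustration of the changes described above, a cached version of the job might look like the sketch below. The exact variables and paths are assumptions: `PIP_CACHE_DIR` and `POETRY_VIRTUALENVS_IN_PROJECT` are one possible way to point pip and Poetry at directories inside the project, and the image, package name, and test command are hypothetical.

```yaml
stages:
  - test

variables:
  # Keep pip's download cache inside the project directory,
  # since GitLab can only cache paths located there.
  PIP_CACHE_DIR: "$CI_PROJECT_DIR/.cache/pip"
  # Assumed setting: make Poetry create the virtual environment in ./.venv.
  POETRY_VIRTUALENVS_IN_PROJECT: "true"

test:
  stage: test
  image: python:3.11  # assumed base image
  cache:
    key:
      # The cache key is derived from these files, so a change
      # in either one produces a new, empty cache.
      files:
        - poetry.lock
        - .gitlab-ci.yml
    paths:
      - .cache/pip
      - .venv
  before_script:
    # Hypothetical Debian package, as in the uncached version.
    - apt-get update && apt-get install -y --no-install-recommends libpq-dev
    - pip install poetry
    - poetry install
  script:
    - poetry run python manage.py test  # assumed test command
```

Using the lock file in the key ties the cache to the exact set of dependencies, and including .gitlab-ci.yml invalidates it whenever the pipeline definition itself changes.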

Results

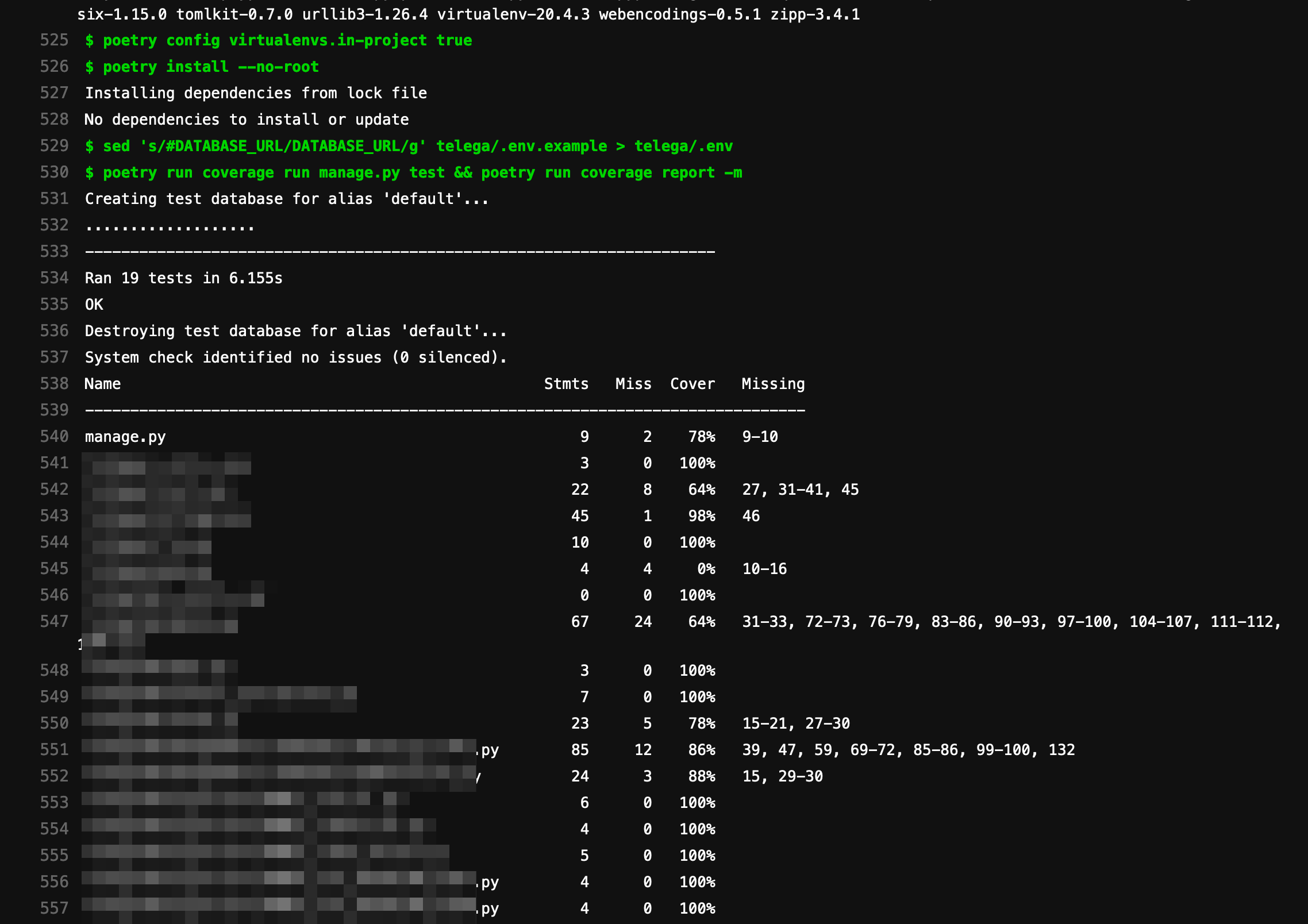

The job's running time dropped from 2 minutes to 1 minute. Checking and unpacking the cache adds a step of its own, of course, but it is still faster than installing the dependencies from PyPI.

In the screenshot of the job logs, we can see pip using the cache.

And here we can see that Poetry did not install anything new.

Dependency caching in GitLab CI/CD is a powerful tool for speeding up jobs and saving resources.